Hi Everyone,

I am an embedded systems engineer, and my hobby is developing real-time music visualization technology. It makes it possible to build fully autonomous, intelligent electronic devices that translate a musical audio stream into light imagery.

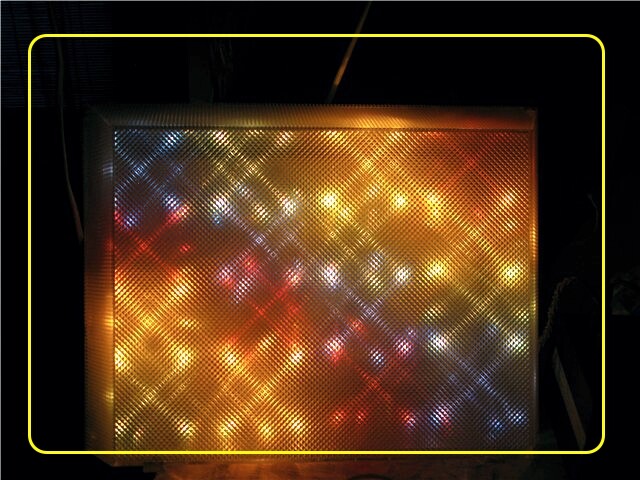

I call it RTMV-technology. It was originally conceived as a 2D visualization, but a full-fledged 2D prototype turned out to be too expensive for me to manufacture right now. So I tried a simplified version instead: 1D visualization. The idea was to get a relatively inexpensive instrument that you simply connect an audio signal to and then enjoy the light images while listening to music.

This project is called CLUBBEST. It was initially focused on club music, but as time has shown, it is suitable for visualizing any piece of music. Of course, there are many limitations that come from using inexpensive components and a low-performance MCU, but even so, I have received a lot of positive feedback. The most inspiring reviews for me are the ones where subscribers write, after watching, “I saw the music.” Yes, I created this technology precisely in order to see the music, and I keep getting that kind of feedback as the technology develops.

Initially, I made CLUBBEST as a tool to accompany listening to music at my PC. But as it turned out, it can also be used at the workplace of a composer, musician, or DJ, and as interior decoration for clubs, cafes, and restaurants.

You can find videos of the technology visualizing pieces of music on my YouTube channel: https://www.youtube.com/@CatcatcatElectronics . The channel shows prototypes of two systems, CLUBBEST M 68 and CLUBBEST M 100.

I believe that, even at the current level of development, it is possible to create a commercial instrument for DJs and for live performances by musicians. Such an instrument could be placed in front of the DJ console, facing the audience (or behind the DJ, to the left and right). I believe that light interpretation enhances the perception of a piece of music several times over.

The distinctive feature of the idea is that a DJ, for example, could focus on the music during a live performance and leave the visualization to the electronics.

In the current prototypes, the final optical device is a linear arrangement of identical light sources. To work with the lighting fixtures used on stage, a configurator is needed in which the user specifies each device's type and location. For RTMV-technology, it is important to know how the light sources are positioned relative to the viewer, what type they are, and what they can do.
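
To make the configurator idea a bit more concrete, here is a minimal Python sketch of the kind of data it might hold for each fixture. Everything in it is an illustrative assumption rather than the actual RTMV format: the `Fixture` and `FixtureType` names, the coordinate convention, the `channels` field, and the example values are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class FixtureType(Enum):
    # Hypothetical categories; a real configurator would list actual device types.
    LED_BAR = "led_bar"
    SPOT = "spot"
    MOVING_HEAD = "moving_head"


@dataclass(frozen=True)
class Fixture:
    name: str
    kind: FixtureType
    # Position relative to the viewer, in metres (assumed convention):
    # x = left(-)/right(+), y = height, z = distance in front of the viewer.
    x: float
    y: float
    z: float
    channels: int = 1  # assumed: number of independently controllable light sources


def left_to_right(fixtures):
    """Order fixtures as the viewer sees them, from left to right."""
    return sorted(fixtures, key=lambda f: f.x)


# Example stage description with made-up values.
stage = [
    Fixture("bar_right", FixtureType.LED_BAR, x=2.0, y=1.0, z=5.0, channels=50),
    Fixture("bar_left", FixtureType.LED_BAR, x=-2.0, y=1.0, z=5.0, channels=50),
    Fixture("spot_center", FixtureType.SPOT, x=0.0, y=3.0, z=6.0),
]

print([f.name for f in left_to_right(stage)])
# prints ['bar_left', 'spot_center', 'bar_right']
```

With a description like this, the visualization logic could in principle map a 1D light pattern onto fixtures in the order the audience sees them, whatever their physical type.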

I’m currently trying to contact inMusic to interest them in developing this technology and creating new tools that will let you take a different look at the light show.

Right now, though, it is important for me to hear your opinion on how accurately or closely the light visualization expresses the theme of a piece of music. In the latest videos on my channel, I tried to collect different styles so that the capabilities of the algorithms can be evaluated.

If you have any questions, feel free to ask. I am ready to provide more technical information to help explain the idea behind the visualization.

I would be grateful for your feedback.